Someone just ran cat /etc/shadow in your production pod. Your runtime security agent caught it. The Kubernetes audit log shows two engineers had active exec sessions at that exact moment. You need to know who did it. You can’t.

This is not a tooling problem. Your cluster sits behind a zero trust access layer, meaning every user is cryptographically verified before they can touch the Kubernetes API. Every API call is logged with the caller’s identity attached. A runtime security agent watches every syscall at the kernel level. On paper, that looks like complete visibility.

In practice, there is a structural gap baked into Kubernetes itself that makes it impossible to attribute commands run inside a pod to the person who ran them. No amount of tooling fixes this. The gap is in the architecture.

Where Identity Disappears

When you run kubectl exec, your identity starts the journey with you. By the time a process spawns inside the container, it is gone. Here is exactly where it dies and why.

kubectl exec: The Request

kubectl sends an HTTP POST to the API server with your credentials attached. The API server authenticates the request, resolves your identity, and loads it into the request context, a Go object that lives only for the duration of that request. It then checks RBAC and writes an audit log entry with your identity, the target pod, and the timestamp.

This is the only moment in the entire flow where your identity exists in a structured, machine-readable form. Miss it, and it is gone.

API Server to Kubelet: The Dropped Identity

The API server now needs to reach the kubelet on the node where the pod is running. It does this over HTTPS, using its own client certificate and not your credentials. Your identity, sitting in the request context, is used for nothing further.

    func (r *ExecREST) Connect(ctx context.Context, name string,
        opts runtime.Object, responder rest.Responder) (http.Handler, error) {
        // ...
        // ctx is used here only to look up the pod and its kubelet.
        location, transport, err := pod.ExecLocation(
            ctx, r.Store, r.KubeletConn, name, execOpts,
        )
        // ...
        // The proxy handler receives a URL and a transport, not ctx.
        return newThrottledUpgradeAwareProxyHandler(
            location, transport, false, true, responder,
        ), nil
    }

Source: subresources.go

ExecLocation uses the context only to find which kubelet node the pod is running on. It returns a *url.URL, a plain network address. The proxy handler is built from this URL and the kubelet transport. Neither carries any trace of who made the original request. The context, and the identity inside it, is not passed forward.
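The same effect can be reproduced outside Kubernetes. The following is a minimal sketch, not API server code: a front handler stores a user in the request context and then proxies to a backend, and the backend reports what identity it can find. The names userKey and seenByBackend are hypothetical, and the standard library reverse proxy stands in for the upgrade-aware proxy handler.

```go
package main

import (
	"context"
	"fmt"
	"net/http"
	"net/http/httptest"
	"net/http/httputil"
	"net/url"
)

// userKey is a hypothetical context key standing in for the API server's
// authenticated user info.
type userKey struct{}

// seenByBackend sends one request through a front handler that stores user
// in the request context and then proxies to a backend. It returns the user
// value the backend handler found in its own request context.
func seenByBackend(user string) string {
	seen := make(chan string, 1)

	// The backend plays the kubelet: it reports whatever identity it can find.
	backend := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		v, _ := r.Context().Value(userKey{}).(string)
		seen <- v
	}))
	defer backend.Close()

	target, err := url.Parse(backend.URL)
	if err != nil {
		panic(err)
	}
	proxy := httputil.NewSingleHostReverseProxy(target)

	// The front plays the API server: identity lives in the context, and the
	// request is forwarded using only a target URL and a transport.
	front := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		ctx := context.WithValue(r.Context(), userKey{}, user)
		proxy.ServeHTTP(w, r.WithContext(ctx))
	}))
	defer front.Close()

	resp, err := http.Get(front.URL)
	if err != nil {
		panic(err)
	}
	resp.Body.Close()
	return <-seen
}

func main() {
	fmt.Printf("backend saw user: %q\n", seenByBackend("alice"))
}
```

Context values are in-process state. Unless the proxy copies them into a header or some other field on the wire, the next hop has nothing to read.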

Kubelet to CRI: The Protocol Has No Room for Identity

Once the kubelet receives the request, it already knows which pod and container to invoke. The kubelet and the container runtime (containerd, typically) live on the same node and communicate over a Unix socket using gRPC. This is where the exec request crosses into the runtime layer.

The message kubelet sends is defined by the CRI protobuf:

    message ExecRequest {
        string container_id = 1;
        repeated string cmd = 2;
        bool tty = 3;
        bool stdin = 4;
        bool stdout = 5;
        bool stderr = 6;
    }

Source: api.proto

Six fields. Container ID, command, a TTY flag, and three stream flags. Identity is not one of them, not as an optional field, not as metadata, not anywhere. The schema has no room for it.

containerd registers the session and returns an ExecResponse containing a URL for its streaming endpoint. On current Kubernetes versions the kubelet does not hand this URL to the API server. It proxies the stream itself, relaying bytes between the API server’s connection and the runtime’s streaming endpoint.

Once the stream connects, containerd calls runc, which spawns the process inside the container’s existing namespaces. runc has no identity information to inject. Nothing in the chain above it ever carried it this far.

The process is visible. The command is visible. What is not visible is who asked for it. Within the runtime’s boundary, there is no attribution.

The Connection Upgrade: Everyone Becomes a Proxy

Once the runtime’s streaming endpoint is ready, the API server sends an HTTP 101 Switching Protocols response back to the client. The connection upgrades. From this point, the API server is a proxy forwarding raw bytes between the client and the kubelet, which relays them to the runtime streaming endpoint. There are no further HTTP requests. There are no headers. There is no request context. There is just the stream.

The Gap in Practice

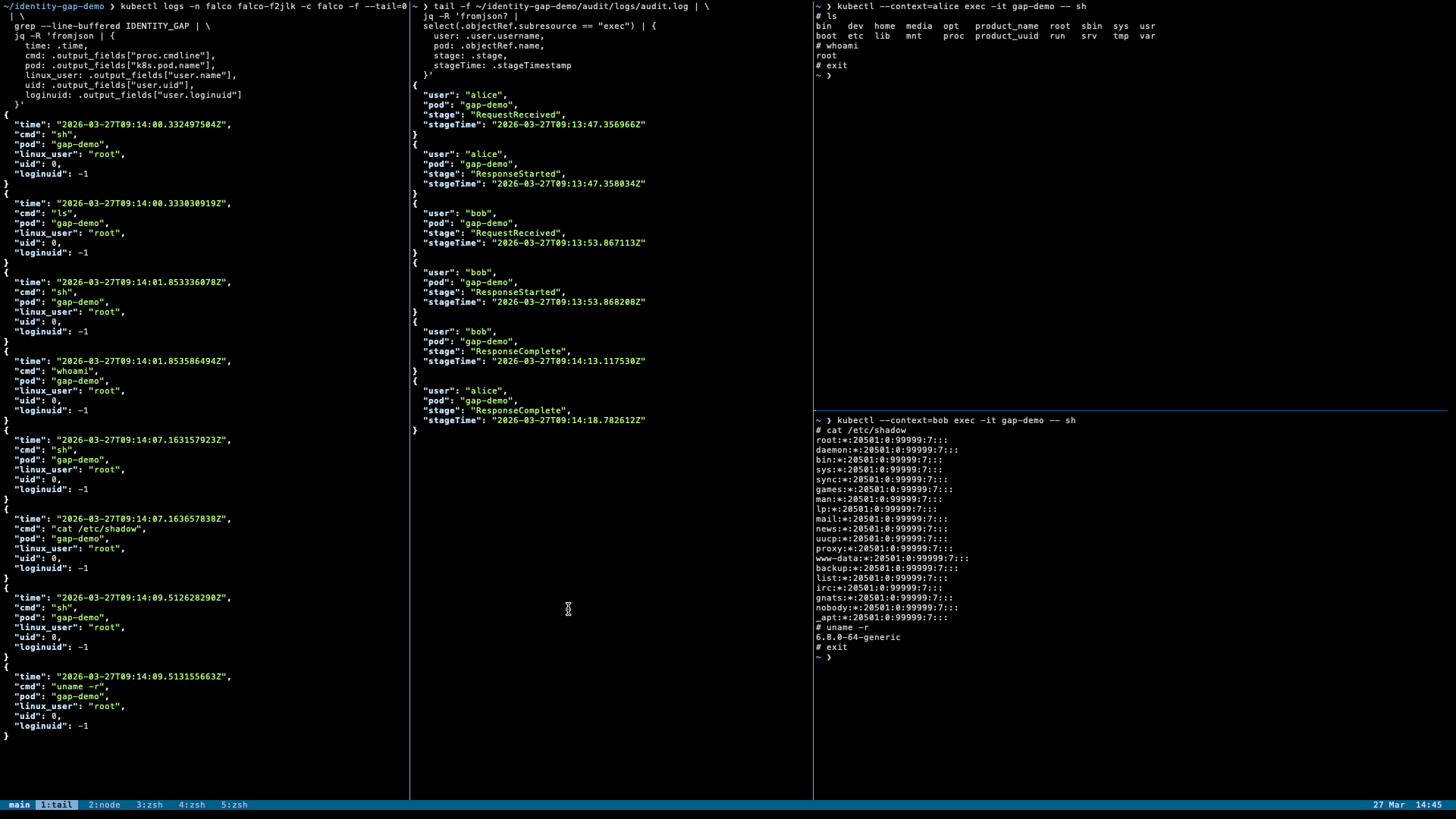

The following demonstration runs two simultaneous exec sessions into the same pod and attempts to attribute a command to a specific user using the Kubernetes audit log and a runtime security agent. It cannot be done.

Setup

The cluster is a local kind cluster with Kubernetes audit logging enabled, writing to a local file. Two users, alice and bob, both hold valid certificates and have RBAC permission to exec into pods. Falco runs on the cluster with a single custom rule:

    - rule: IDENTITY GAP - Interactive command in container
      # desc is required by Falco; the wording here is illustrative.
      desc: Command spawned from an interactive shell in the gap-demo pod
      condition: >
        evt.type = execve and
        proc.pname in (bash, sh, zsh, ash, dash, ksh) and
        proc.tty != 0 and
        k8s.pod.name = "gap-demo"
      output: >
        IDENTITY_GAP
        {"cmd": "%proc.cmdline",
        "pod": "%k8s.pod.name",
        "namespace": "%k8s.ns.name",
        "linux_user": "%user.name",
        "uid": "%user.uid",
        "parent": "%proc.pname",
        "time": "%evt.time"}
      priority: WARNING
      tags: [identity_gap]

This rule captures every command spawned from an interactive shell session inside the target pod.

What the Audit Log Sees

Here is what was recorded when alice and bob opened exec sessions into the pod:

    {"user": "alice", "pod": "gap-demo", "stage": "RequestReceived", "stageTime": "2026-03-27T09:13:47.356966Z"}
    {"user": "alice", "pod": "gap-demo", "stage": "ResponseStarted", "stageTime": "2026-03-27T09:13:47.358034Z"}
    {"user": "bob", "pod": "gap-demo", "stage": "RequestReceived", "stageTime": "2026-03-27T09:13:53.867113Z"}
    {"user": "bob", "pod": "gap-demo", "stage": "ResponseStarted", "stageTime": "2026-03-27T09:13:53.868208Z"}
    {"user": "bob", "pod": "gap-demo", "stage": "ResponseComplete", "stageTime": "2026-03-27T09:14:13.117530Z"}
    {"user": "alice", "pod": "gap-demo", "stage": "ResponseComplete", "stageTime": "2026-03-27T09:14:18.782612Z"}

Kubernetes Audit Logs

RequestReceived fires when the request arrives. ResponseStarted fires when the connection upgrades to a stream and the exec session becomes live. ResponseComplete fires when the WebSocket closes and the session ends.

The audit log knows exactly who opened each session and when. What it does not know is what happened inside those sessions.
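The stage pairs can be turned into per-user session windows. The sketch below parses the ResponseStarted and ResponseComplete entries shown above and pairs them by user. It assumes the simplified entry shape used in this post, not the full audit event schema, and it assumes one session per user. A real pipeline would more likely pair stages by the event's auditID. The type and function names are hypothetical.

```go
package main

import (
	"encoding/json"
	"fmt"
	"time"
)

// auditEntry mirrors the simplified entries shown in this post, not the
// full Kubernetes audit event schema.
type auditEntry struct {
	User      string    `json:"user"`
	Pod       string    `json:"pod"`
	Stage     string    `json:"stage"`
	StageTime time.Time `json:"stageTime"`
}

// window is the interval during which one exec session was live.
type window struct {
	User  string
	Start time.Time
	End   time.Time
}

// demoAudit holds the ResponseStarted and ResponseComplete entries from the log above.
var demoAudit = []string{
	`{"user":"alice","pod":"gap-demo","stage":"ResponseStarted","stageTime":"2026-03-27T09:13:47.358034Z"}`,
	`{"user":"bob","pod":"gap-demo","stage":"ResponseStarted","stageTime":"2026-03-27T09:13:53.868208Z"}`,
	`{"user":"bob","pod":"gap-demo","stage":"ResponseComplete","stageTime":"2026-03-27T09:14:13.117530Z"}`,
	`{"user":"alice","pod":"gap-demo","stage":"ResponseComplete","stageTime":"2026-03-27T09:14:18.782612Z"}`,
}

// sessionWindows pairs each ResponseStarted with the next ResponseComplete
// for the same user. Assumption: at most one open session per user.
func sessionWindows(lines []string) ([]window, error) {
	open := map[string]time.Time{}
	var out []window
	for _, l := range lines {
		var e auditEntry
		if err := json.Unmarshal([]byte(l), &e); err != nil {
			return nil, err
		}
		switch e.Stage {
		case "ResponseStarted":
			open[e.User] = e.StageTime
		case "ResponseComplete":
			if start, ok := open[e.User]; ok {
				out = append(out, window{User: e.User, Start: start, End: e.StageTime})
				delete(open, e.User)
			}
		}
	}
	return out, nil
}

func main() {
	ws, err := sessionWindows(demoAudit)
	if err != nil {
		panic(err)
	}
	for _, w := range ws {
		fmt.Printf("%s: %s to %s\n", w.User, w.Start.Format(time.RFC3339), w.End.Format(time.RFC3339))
	}
}
```

Windows are emitted in the order sessions close, so bob's appears before alice's. Each window says who was connected and for how long, and nothing about what ran inside it.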

What the Runtime Agent Sees

Here is what Falco captured when cat /etc/shadow was run inside the pod:

    {
      "time": "2026-03-27T09:14:07.163657838Z",
      "pod": "gap-demo",
      "cmd": "cat /etc/shadow",
      "parent": "sh",
      "user.name": "root",
      "user.uid": 0
    }

Falco output capture

Falco knows the command, the pod, the time, and the parent process. It does not know who asked for it. The runtime never had that information to begin with.

The Question Neither Source Can Answer

Who ran cat /etc/shadow? It was run at 09:14:07. At that moment, both alice and bob had active sessions in the same pod. Neither had closed their connection yet.

Time-based correlation only works when sessions do not overlap. Here they do. The audit log knows who opened sessions. Falco knows what was run. Neither source can tell you who ran this specific command.

Both log sources are working exactly as designed. The gap is not in the tooling. When two exec sessions overlap, the question of who ran a specific command inside a pod cannot be answered from any data available at this layer.
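The limit of time-based correlation can be shown in a few lines. The sketch below takes the session windows from the audit log (ResponseStarted to ResponseComplete) and the Falco event time, and returns every user whose session was live at that instant. The type and function names are hypothetical; the timestamps are the ones recorded above.

```go
package main

import (
	"fmt"
	"time"
)

// session is one exec session window taken from the audit log.
type session struct {
	User  string
	Start time.Time
	End   time.Time
}

// mustParse parses an RFC 3339 timestamp and panics on malformed input.
func mustParse(s string) time.Time {
	t, err := time.Parse(time.RFC3339Nano, s)
	if err != nil {
		panic(err)
	}
	return t
}

// demoSessions returns the two windows recorded in the audit log above.
func demoSessions() []session {
	return []session{
		{User: "alice", Start: mustParse("2026-03-27T09:13:47.358034Z"), End: mustParse("2026-03-27T09:14:18.782612Z")},
		{User: "bob", Start: mustParse("2026-03-27T09:13:53.868208Z"), End: mustParse("2026-03-27T09:14:13.117530Z")},
	}
}

// candidates returns every user whose session was live at the given time.
func candidates(sessions []session, at time.Time) []string {
	var users []string
	for _, s := range sessions {
		if !at.Before(s.Start) && !at.After(s.End) {
			users = append(users, s.User)
		}
	}
	return users
}

func main() {
	// Time of the Falco event for cat /etc/shadow.
	fmt.Println(candidates(demoSessions(), mustParse("2026-03-27T09:14:07.163657838Z"))) // [alice bob]
	// A later instant, after bob's session had closed.
	fmt.Println(candidates(demoSessions(), mustParse("2026-03-27T09:14:15Z"))) // [alice]
}
```

The lookup is unambiguous only when it returns a single name. For the event at 09:14:07 it returns two, and no amount of timestamp precision changes that.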

Existing Workarounds: So Close Yet So Far

Teleport

Teleport sits as a proxy in front of the Kubernetes API server and records interactive exec sessions as PTY streams. For interactive sessions, you can replay exactly what was typed and attribute it to the user who opened the session.

This does not close the gap described in this post. Teleport operates at the PTY layer, above the runtime. A process spawned non-interactively, a background job, or any kernel-level event that does not produce terminal output is invisible to it. The structural gap where identity never reaches the runtime layer remains open beneath it.

Gatekeeper

Gatekeeper operates as an admission controller, meaning it can only evaluate requests at the point they arrive at the API server. It can enforce policies on the initial exec command, for example allowing kubectl exec pod -- ls /some/path but blocking kubectl exec pod -- bash. This prevents interactive shell access for users who should only run specific commands.

But this only covers the initial command passed at exec time. Once the connection upgrades to a stream, Gatekeeper has no visibility. It cannot see what happens inside an interactive session. It also cannot prevent a determined user from wrapping a shell inside an allowed command. The admission layer is a gate, not a camera.
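The wrapping problem is easy to see with a toy policy. The sketch below is not Gatekeeper or Rego. It is a hypothetical Go stand-in for any check that inspects only the command passed at exec time, with an assumed allow-list keyed on the first argument.

```go
package main

import "fmt"

// allowed is a hypothetical allow-list keyed on the first element of the
// exec command. It is illustrative only and not a Gatekeeper policy.
var allowed = map[string]bool{"ls": true, "cat": true, "find": true}

// admit mimics a policy that sees only the command passed at exec time.
func admit(cmd []string) bool {
	return len(cmd) > 0 && allowed[cmd[0]]
}

func main() {
	fmt.Println(admit([]string{"ls", "/some/path"})) // true
	fmt.Println(admit([]string{"bash"}))             // false
	// An allowed binary that can itself launch a shell passes the check.
	fmt.Println(admit([]string{"find", "/", "-maxdepth", "0", "-exec", "sh", ";"})) // true
}
```

A stricter policy could inspect every argument, but it would still be reasoning about a command line, not about what the resulting process does once the stream is open.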

A Shot at Bridging the Gap

The gap exists because identity lives at the HTTP layer and process attribution lives at the kernel layer. Any real solution has to bridge that gap in one of two directions. Either push identity down to the runtime, or pull process information up to the audit layer.

Push Identity Down

The most complete fix would be to add an identity field to the ExecRequest protobuf. Kubelet would receive the caller identity from the API server and pass it through to containerd, which could then set it as metadata before spawning the process. A runtime security agent could read it directly at execve time.

This requires changes at every layer: the CRI protobuf, kubelet, containerd, CRI-O, and a formal Kubernetes Enhancement Proposal. It is the architecturally complete solution and the most invasive one. No KEP exists for this today.

Pull Process Information Up

The more targeted direction works in reverse. containerd knows the host PID of the spawned process, because it created that process via runc. That information exists in the runtime as soon as the process starts (in current containerd, when the stream connects). It just never goes anywhere.

Adding a single field to ExecResponse:

    message ExecResponse {
        string url = 1;
        int64 host_pid = 2;
    }

Suggested protobuf structure

This would allow containerd to pass the host PID back up to kubelet, which passes it to the API server, which includes it in the ResponseStarted audit event. The audit log entry would then contain the node and host PID alongside the caller identity.

A runtime security agent capturing execve events already knows the host PID, the parent PID, and the node. With this one additional field in the audit log, attribution becomes a direct lookup. No timestamps. No overlap problem. No ambiguity. Alice opened the session that spawned PID 12345. cat /etc/shadow ran as a child of PID 12345. Alice ran cat /etc/shadow.

This requires one new protobuf field, a small kubelet change, and an audit event schema update. No changes below the CRI layer. The kernel does not need to know anything about Kubernetes identity.
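The lookup could look like the sketch below. Everything in it is hypothetical: the host_pid field does not exist in the audit log today, the node name and every PID other than 12345 are made up, and the types are illustrative. It assumes the runtime agent can supply the process lineage (the process's own PID followed by its ancestors), since the command usually runs as a child of the shell that the exec session spawned.

```go
package main

import "fmt"

// procKey identifies a process on a node by its host PID.
type procKey struct {
	Node string
	PID  int64
}

// procEvent is a hypothetical runtime event. Lineage holds the process's own
// host PID followed by its ancestors, nearest first.
type procEvent struct {
	Node    string
	Cmd     string
	Lineage []int64
}

// demoRoots maps exec root processes to users, as an audit log carrying the
// proposed host_pid field might. All values are made up for illustration.
func demoRoots() map[procKey]string {
	return map[procKey]string{
		{Node: "node-a", PID: 12345}: "alice",
		{Node: "node-a", PID: 12399}: "bob",
	}
}

// demoEvent is cat /etc/shadow running as a child of alice's shell.
func demoEvent() procEvent {
	return procEvent{Node: "node-a", Cmd: "cat /etc/shadow", Lineage: []int64{12410, 12345, 1}}
}

// attribute walks the lineage until it reaches a known exec root.
func attribute(roots map[procKey]string, ev procEvent) (string, bool) {
	for _, pid := range ev.Lineage {
		if user, ok := roots[procKey{Node: ev.Node, PID: pid}]; ok {
			return user, true
		}
	}
	return "", false
}

func main() {
	user, ok := attribute(demoRoots(), demoEvent())
	fmt.Println(user, ok) // alice true
}
```

Host PIDs are reused over time, so a real join would likely also check that the event falls inside the session's audit window. That check narrows a PID match. It does not bring back the overlap problem.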

Where This Leaves Us

Neither approach has a KEP filed. Neither has been built. Whether either direction is viable as a Kubernetes upstream change is an open question. It depends on whether the community considers runtime identity attribution a problem worth solving at the platform level.

This may not be an oversight. The Kubernetes project may have deliberately drawn a boundary between the control plane and the runtime, judging that identity attribution at the syscall level belongs to a higher layer or a separate tooling concern. That is a reasonable position. The gap exists either way.

References

- Falco issue #2895 — “Inject Kubernetes Control Plane users into Falco syscalls logs for kubectl exec activities”, filed October 2023. Independent validation of the gap described in this post.